Logistic regression is used to classify samples into a positive or a negative class. It is closely related to linear regression: a linear combination of the features is passed through the sigmoid function, so the output is a value between 0 and 1 that is interpreted as the probability of the given sample being positive. For example, what is the probability that a given email is spam? We can then predict the positive case if the hypothesis outputs a value above a certain threshold, which is normally 0.5.
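To make the idea concrete, here is a minimal sketch of the hypothesis and the threshold rule in plain Python. It assumes the standard sigmoid form of the hypothesis; the names `sigmoid`, `hypothesis`, `predict`, and the parameter vector `theta` are illustrative choices, and the weights in the usage lines are made up rather than learned from data.

```python
import math


def sigmoid(z):
    """Map any real number to the interval (0, 1)."""
    # Branch on the sign of z so that math.exp never receives a large
    # positive argument, which would raise OverflowError.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def hypothesis(theta, x):
    """Estimated probability that sample x belongs to the positive class."""
    # Linear combination of the features, as in linear regression...
    z = sum(t * xi for t, xi in zip(theta, x))
    # ...squashed into (0, 1) by the sigmoid.
    return sigmoid(z)


def predict(theta, x, threshold=0.5):
    """Return 1 (positive) if the probability reaches the threshold, else 0."""
    return 1 if hypothesis(theta, x) >= threshold else 0


# Hypothetical weights and sample; x[0] = 1.0 acts as the bias term.
theta = [0.0, 1.0]
x = [1.0, 2.0]
print(hypothesis(theta, x))  # a probability strictly between 0 and 1
print(predict(theta, x))     # 1 if that probability is at least 0.5
```

Raising the threshold makes the classifier more conservative about predicting the positive class, and lowering it does the opposite; 0.5 is only the usual default.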

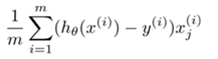

Logistic regression